A machine learning model that could lead a driver directly to an empty parking spot won second prize in the Graduate level: MS category at the 2018 Science and Technology Open House Competition. Dreams of computer systems with godlike powers, and the wisdom to use them, are not just a theological construct but a technological possibility. As sci-fi éminence grise Arthur C. Clarke remarked, “any sufficiently advanced technology is indistinguishable from magic.”

Artificial Intelligence (AI) may be the buzzword of our times but Machine Learning (ML) is really the brass tacks. Machine learning has made great inroads into different areas. It can look at pictures of biopsies and pick out possible cancers. It can be taught to predict the outcome of legal cases, write press releases and even compose music! However, the sci-fi future where machine learning beats humans in every conceivable department and is perpetually learning isn’t a reality yet. So, how does machine learning fit into the world of content management systems like Drupal? Before finding that out, let’s go back to the times when computers did not even exist.

Machine learning predates computers!

In this day and age, self-driving cars, voice-activated assistants and social media feeds are some of the tools powered by machine learning. Compilations made by the BBC and Forbes show that machine learning has a long timeline that relies on mathematics from hundreds of years ago and on the enormous developments in computing over the years.

Mathematical innovations like Bayes’ Theorem (1812), the Least Squares method for data fitting (1805) and Markov Chains (1913) laid the foundation for modern machine learning concepts.

In the late 1940s, stored-program computers like the Manchester Small-Scale Experimental Machine (1948) came into the picture. Through the 1950s and 1960s, several influential developments followed, such as the ‘Turing Test’, the first computer learning program, the first neural network for computers and the ‘nearest neighbour’ algorithm. In the nineties, IBM’s Deep Blue beat the world chess champion.

Post-millennium, several technology giants such as Google, Amazon, Microsoft, IBM and Facebook are actively working on more advanced machine learning models. Proof of this is AlphaGo, developed by Google DeepMind, which beat a professional player at Go, a game considered more intricate than chess!

Discovering Machine Learning

Machine learning is a form of AI that allows a system to learn from data rather than through explicit programming. It is not a simple process. As the algorithms ingest training data, it becomes possible to produce more accurate models based on that data.

Advanced machine learning algorithms are composed of many technologies (such as deep learning, neural networks and natural-language processing), used in unsupervised and supervised learning, that operate guided by lessons from existing information. - Gartner

When you train your machine learning algorithm with data, the output that is generated is the machine learning model. After training, when you provide an input to the model, it gives you an output. For instance, a predictive algorithm will build a predictive model. Then, when the predictive model is provided with new data, you receive a prediction based on the data that trained the model.
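The train-then-predict flow can be illustrated with a minimal sketch. It assumes the simplest possible algorithm, a straight-line least squares fit, and toy numbers invented purely for illustration; the function names `train` and `predict` are hypothetical and not taken from any particular library.

```python
from statistics import mean


def train(xs, ys):
    """Training step: fit y = slope * x + intercept by ordinary least squares.

    The returned dictionary is the "model" produced from the training data.
    """
    x_bar, y_bar = mean(xs), mean(ys)
    covariance = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    variance = sum((x - x_bar) ** 2 for x in xs)
    slope = covariance / variance
    intercept = y_bar - slope * x_bar
    return {"slope": slope, "intercept": intercept}


def predict(model, x):
    """Prediction step: feed a new input to the trained model."""
    return model["slope"] * x + model["intercept"]


# Toy training data (invented for illustration): inputs and known outputs.
inputs = [1, 2, 3, 4]
outputs = [3, 5, 7, 9]

model = train(inputs, outputs)   # the algorithm ingests data and yields a model
print(predict(model, 5))         # the model answers for an input it has not seen
```

Real models are far more elaborate, but the shape is the same: data goes into an algorithm, a model comes out, and the model is then queried with new inputs.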

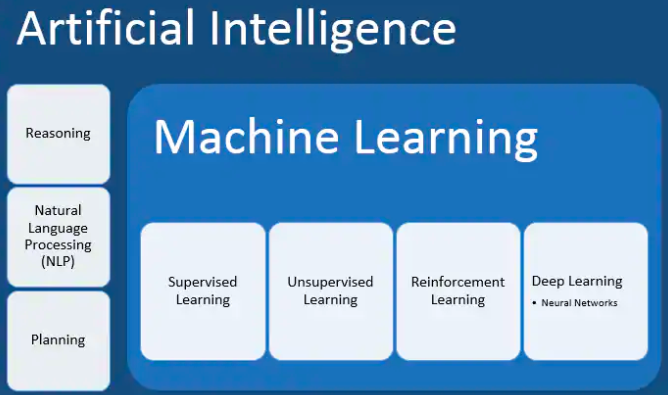

Difference between AI and machine learning

Machine learning may have enjoyed massive success of late, but it is just one of the approaches for achieving artificial intelligence.

Forrester defines artificial intelligence as “the theory and capabilities that strive to mimic human intelligence through experience and learning”. AI systems generally demonstrate traits like planning, learning, reasoning, problem solving, knowledge representation, social intelligence and creativity, among others.

Alongside machine learning, there are numerous other approaches used to build AI systems, such as evolutionary computation and expert systems.

Categories of machine learning

Machine learning is generally divided into the following categories:

- Supervised learning: It typically begins with an established set of data and a certain understanding of how that data is classified. It intends to find patterns in the data that can then be applied to an analytics process.

- Unsupervised learning: It is used when the problem involves a large amount of unlabelled data, so the algorithm has to find structure in that data on its own.

- Reinforcement learning: It is a behavioural learning model. The algorithm receives feedback from the data analysis, thereby guiding the user to the best outcome.

- Deep learning: It incorporates neural networks in successive layers for learning the data in an iterative manner.
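To make the first two categories concrete, here is a minimal sketch contrasting them on a single numeric feature. The data (article reading times in minutes) and the labels are invented for illustration, and both functions are deliberately tiny stand-ins: a one-nearest-neighbour classifier for supervised learning and a two-cluster k-means for unsupervised learning. Reinforcement learning and deep learning are not covered here.

```python
def nearest_neighbour(labelled, point):
    """Supervised: return the label of the closest labelled example."""
    closest = min(labelled, key=lambda example: abs(example[0] - point))
    return closest[1]


def two_means(values, iterations=10):
    """Unsupervised: split unlabelled values into two clusters (k-means, k=2)."""
    centres = [min(values), max(values)]
    groups = [[], []]
    for _ in range(iterations):
        groups = [[], []]
        for value in values:
            # Assign each value to whichever centre is nearer.
            index = 0 if abs(value - centres[0]) <= abs(value - centres[1]) else 1
            groups[index].append(value)
        # Move each centre to the mean of its group (keep it if the group is empty).
        centres = [sum(g) / len(g) if g else c for g, c in zip(groups, centres)]
    return groups


# Supervised: the training data carries labels (hypothetical reading times).
labelled = [(1.0, "short"), (2.0, "short"), (9.0, "long"), (10.0, "long")]
print(nearest_neighbour(labelled, 8.0))

# Unsupervised: the same numbers without labels; structure is discovered.
print(two_means([1.0, 2.0, 9.0, 10.0]))
```

The supervised function needs the labels to answer at all, while the unsupervised one only groups similar values and leaves it to a person to decide what each group means.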

Why is machine learning accelerating?

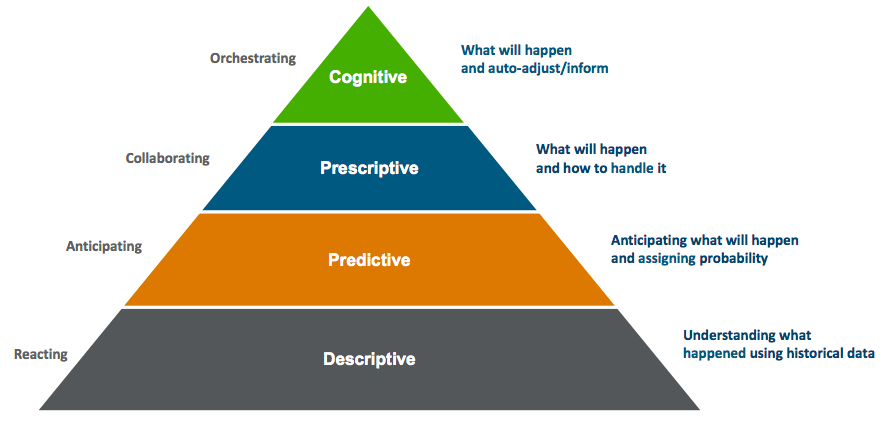

Today, the majority of enterprises rely on descriptive analytics, which is necessary for efficient management but not sufficient to enhance business performance. For businesses to reach a higher level of responsiveness, they need to move beyond descriptive analytics and move up the intelligence capability pyramid. This is where machine learning plays a key role.

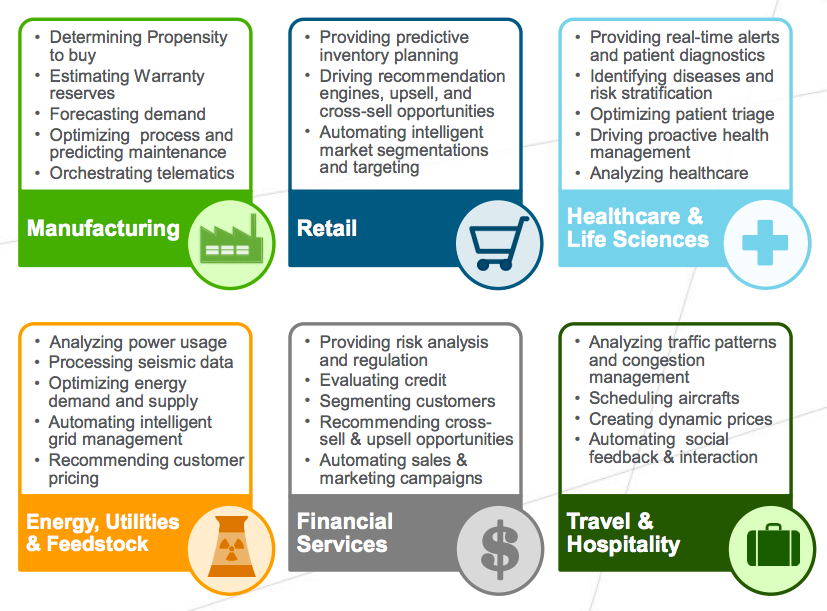

Machine learning is not a new technique but the interest in the field has grown multifold in recent years. For enterprises, machine learning has the ability to scale across a broad range of businesses like manufacturing, financial services, healthcare, retail, travel and many others.

Business processes directly related to revenue generation, such as sales, contract management, customer service, finance, legal, quality, pricing and order fulfilment, are among the most valued applications.

Exponential growth in unstructured data, such as social media posts, sensor data from connected devices, competitor and partner pricing and supply chain tracking data, is one of the reasons why adoption rates of machine learning have skyrocketed.

Internet of Things (IoT) networks, connected devices and embedded systems are generating real-time data, which is great for optimising supply chain networks and increasing demand forecast precision.

Another reason for the success of machine learning is its ability to generate massive data sets through synthetic means, like extrapolation and projection of existing historical data, to develop realistic simulated data.

Moreover, the economics of safe and secure digital storage and cloud computing are merging to put infrastructure costs into free fall, thereby making machine learning more cost-effective for all enterprises.

Machine Learning for Drupal

A session at DrupalCon Baltimore 2017 featured a presentation aimed at machine learning enthusiasts that did not require any coding experience. It showed how to look at data through the eyes of a machine learning engineer.

It also leveraged deep learning and site content to give Drupal superpowers by making use of the same technology that is exploding at Facebook, Google and Amazon.

The demonstration focused on mining Drupal content as the fuel for deep learning. It showed when to use existing ML models or services and when to build your own, as well as how to deploy ML models and use them in production. It also covered free pre-built models and paid services from Amazon, IBM, Microsoft, Google and others.

A drag-and-drop interface was used to create, train and deploy a simple ML model to the cloud with the help of the Microsoft Azure ML API. The Google Speech API was used to turn spoken audio content into text content for use with chatbots and virtual assistants. The Watson REST API was leveraged to perform sentiment analysis. The Google Vision API module was used to add face, logo and object detection to uploaded images. And Microsoft’s ML API was leveraged to automatically build summaries from node content.

Another session at DrupalCon Baltimore 2017 showed how to personalise web content experiences on the basis of subtle elements of a person’s digital persona.

Standard personalisation approaches recommend content on the basis of a person’s profile or past activity. For instance, if a person is searching for a gym bag, something like this works: “Here are some more gym bags”. Or if they are reading movie reviews, this would work: “Maybe you would like this review of the recently released movie”.

But the demonstration shown at this session was more ambitious. The presenters exhibited Deep Feeling, a proof-of-concept project that utilises machine learning techniques to make better recommendations to users. With the help of the Acquia Lift service and Drupal 8, this proof of concept recommended travel experiences on the basis of the kind of things a person shares.

Using the Instagram API to access a person’s stream of consciousness, the demo filtered their feed through a computer-vision API to detect and learn subtle themes about the person’s preferences. Once a notion of what sort of experiences the person thinks are worth sharing was established, the person’s characteristics were matched against the presenters’ own databases.

Another presentation, held at Bay Area Drupal Camp 2018, explored how the CMS and the Drupal Community can put machine learning into practice by leveraging a Drupal module, Drupal’s taxonomy system and Google’s Natural Language Processing API.

Natural language processing concepts such as sentiment analysis, entity analysis, topic segmentation and language identification, among others, were discussed. Numerous natural language processing API alternatives were compared, such as Google’s natural language processing API, TextRazor, Amazon Comprehend and open source solutions like Datamuse.

It explored use cases by assessing and automatically categorising news articles using Drupal’s taxonomy system. Those categories were merged with the sentiment analysis in order to make a recommendation system for a hypothetical news audience.
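The idea of merging categories with sentiment can be sketched in a few lines. This is not the presenters’ implementation: the `Article` class, the `recommend` function, the sample articles and the sentiment scale from -1.0 (negative) to 1.0 (positive) are all assumptions made for illustration, and the category and sentiment values are hard-coded where a real site would obtain them from a natural language processing service and store the category as a taxonomy term.

```python
from dataclasses import dataclass


@dataclass
class Article:
    title: str
    category: str     # taxonomy term, assumed to come from an NLP classification step
    sentiment: float  # assumed scale: -1.0 (negative) to 1.0 (positive)


def recommend(articles, preferred_categories, min_sentiment=0.0, limit=3):
    """Return titles in the reader's preferred categories whose sentiment
    is at least min_sentiment, most positive first."""
    matches = [
        article for article in articles
        if article.category in preferred_categories
        and article.sentiment >= min_sentiment
    ]
    matches.sort(key=lambda article: article.sentiment, reverse=True)
    return [article.title for article in matches[:limit]]


# Hypothetical, hand-written sample data.
articles = [
    Article("Local team wins final", "Sports", 0.8),
    Article("Markets slide again", "Business", -0.6),
    Article("Startup raises funding", "Business", 0.5),
    Article("Injury ends season", "Sports", -0.4),
]

# A reader who follows both categories and prefers upbeat news.
print(recommend(articles, {"Sports", "Business"}))
```

Keeping the category filter and the sentiment threshold as separate parameters makes it easy to tune the recommendations for different audiences without touching how the articles were analysed.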

Future of Machine learning

A report by MarketsandMarkets states that the machine learning market size will grow from USD 1.41 Billion in 2017 to USD 8.81 Billion by 2022, at a Compound Annual Growth Rate (CAGR) of 44.1%.
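For readers unfamiliar with CAGR, the figure can be sanity-checked with the standard formula, (end value / start value) raised to the power of 1 / years, minus 1. The sketch below applies it to the two market sizes quoted above; the function name `cagr` is simply a label chosen for this example.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate: the constant yearly rate that turns
    start_value into end_value over the given number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes in USD billion, as quoted from the report.
rate = cagr(1.41, 8.81, 2022 - 2017)
print(f"{rate:.1%}")
```

The result comes out at roughly 44 percent a year, in line with the reported rate; the small difference presumably stems from rounding in the published market sizes.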

The report further states that the major driving factors for the global machine learning market are technological advancement and the proliferation of data generation. Moreover, increasing demand for intelligent business processes and the rising adoption rates of modern applications are expected to offer opportunities for more growth.

Some of the near-term predictions are:

- Most applications will include machine learning. In a few years, machine learning will become part of almost every software application, with engineers embedding these capabilities directly into our devices.

- Machine learning as a service (MLaaS) will be commonplace. More businesses will start using the cloud to offer MLaaS and take advantage of machine learning without making huge hardware investments or training their own algorithms.

- Computers will get good at talking like humans. As technology gets better and better, solutions such as IBM Watson Assistant will learn to communicate endlessly without using code.

- Algorithms will perpetually retrain. In the near future, more ML systems will connect to the internet and constantly retrain on the most relevant information.

- Specialised hardware will deliver performance breakthroughs. GPUs (Graphics Processing Units) are advantageous for running ML algorithms as they have a large number of simple cores. AI experts are also leveraging Field-Programmable Gate Arrays (FPGAs) which, at times, can even outclass GPUs.

Conclusion

Computers ruling us someday by gaining a superabundance of intelligence is not a likely outcome. It remains a possibility, though, which is why it is widely debated whenever artificial intelligence and machine learning are discussed.

On the brighter side, machine learning has plenty of scope for making our lives better with its tremendous capability to provide unprecedented insights into different matters. And when Drupal and machine learning come together, it is even more exciting as it results in an awesome web experience.

Opensense Labs always strives to fulfil digital transformation endeavours of our partners with a suite of services.

Contact us at [email protected] to know how machine learning can be put to great use in your Drupal web application.
