Ethical design is an integral part of the product development life cycle. Designers have to be well aware of the impact their products have on users’ lives, since that impact can be good or bad depending on what users experience when they purchase and use products and services. Keeping in mind the principles, beliefs and morals of the business, designers should develop products that prioritize users’ safety and security and build the most valuable asset of all: customer trust and loyalty. This article is meant as an eye opener for anyone who needs to understand the significance of ethical design. It covers the essential principles of ethical design and the best practices for designing responsibly for potential users.

Are we being careful while designing our products?

Isn’t it every company’s responsibility to check whether what it offers the world actually adds value to its users’ lives? What if your products are harming them in some way? Are you taking note of it? In practice, it is largely up to companies whether they abide by safety rules and regulations when designing products and services. Some companies spend time and money building a service that ends up having unpleasant side effects for its users. At times, solutions are put forward that completely break customers’ trust in, and loyalty to, their preferred brands. Time and again, design dilemmas start with a homogeneous group of people who design products, services, or processes without thinking about the damaging effects these can have on consumers.

People tend to lose sight of ethical guidelines and fail to realize that ethical issues in technology don’t go away once a specific task is done. It is also necessary to think about what happens to the software, hardware, devices, or data after deployment. Even if you have tested extensively before releasing a product, threats can still arise and create major challenges. It is therefore advisable to keep watching for such risks and to work on solutions. Some leading companies address ethical issues by establishing teams and roles that reflect their diverse customer base and bring in perspectives from different industries, ethnic backgrounds, educational experiences, genders and economic backgrounds. Such teams can design new technology-driven products and services with ethical principles in mind right from the start, which helps them anticipate and avoid issues beforehand.

Are we aware of the principles of ethical design?

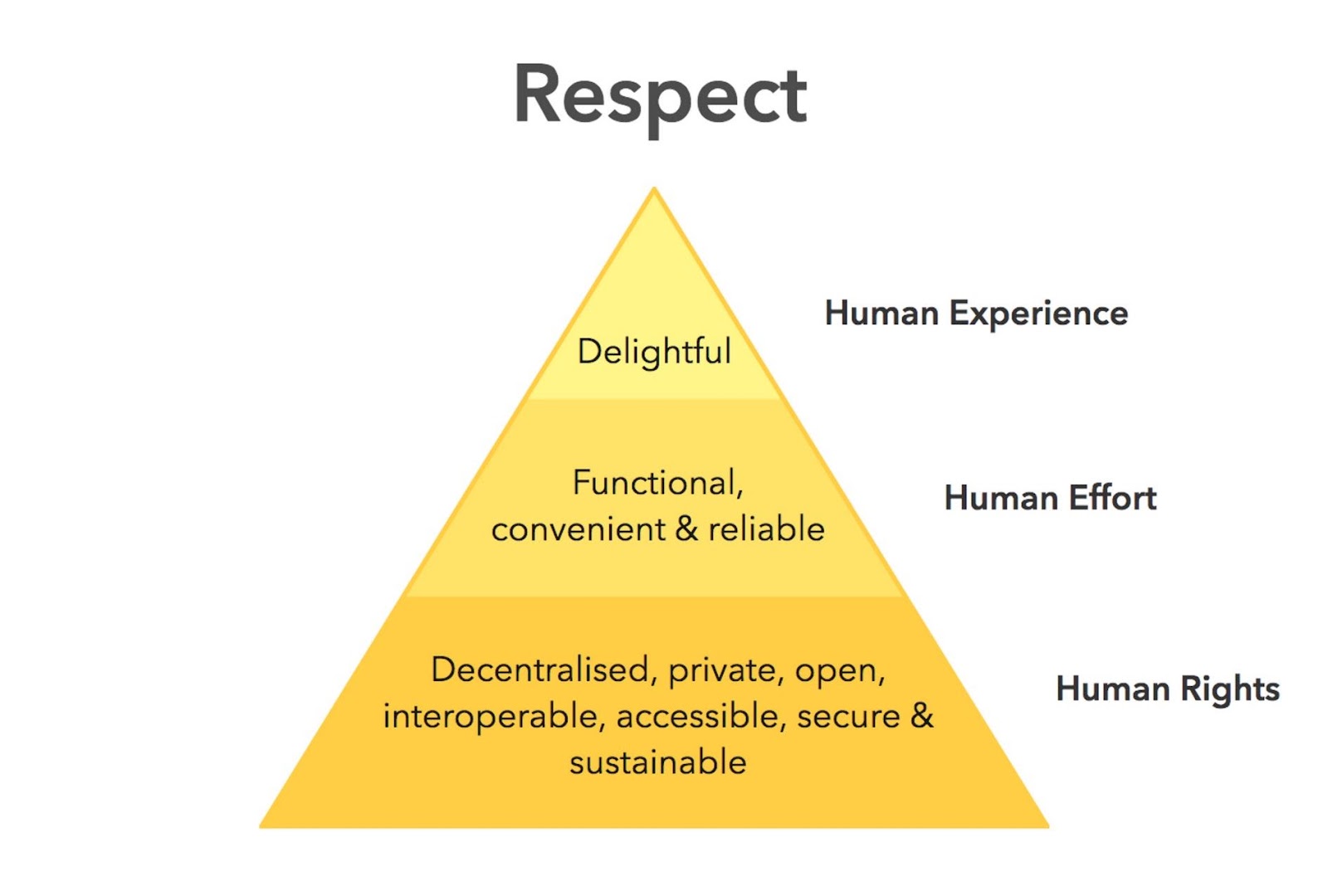

Companies need to prioritize the principles of ethical design and consider them whenever they design a product or service. These principles centre on respect for human rights, human effort, and human experience, and are inspired by the Universal Declaration of Human Rights.

The “Ethical Hierarchy of Needs” pyramid was built by Aral Balkan, a cyborg rights activist, designer, and developer, and Laura Kalbag, a designer from the UK and the author of 'Accessibility For Everyone' from A Book Apart. With this pyramid they explain the core of ethical design: every layer rests and depends on the layer underneath it, and a design is ethical only when all the layers hold.

Here are the principles of ethical design that organizations need to emphasize while designing new technology-driven products and services.

Need of usability

Usability is a basic requirement these days, so an unusable product is a design failure. Most importantly, a design should enable users to accomplish exactly what they want, meet their requirements, and be easy to use without any concern. Here are the five core components of usability given by Jakob Nielsen of the Nielsen Norman Group.

- Learnability. How easy and simple is it for first-time users?

- Efficiency. How swiftly can users perform tasks?

- Memorability. How easily can returning users pick the design up again?

- Errors. How many errors do users make, and how serious are these errors?

- Satisfaction. How satisfying is it to utilize the design?

One of the moral obligations of designers is to create products that are safe and intuitive. A well-known example of failure on this front is Samsung’s Galaxy Note 7, which spontaneously caught fire. In contrast, here is an example of good usability enhancing the user’s experience: the American pharmacy Walgreens supports its users with a mobile application that sends timely reminders to refill items such as vitamins, which can be ordered through the application simply by scanning the barcode on a prescription bottle. Such small adjustments in design can immensely influence user experience.

Need of accessibility

Accessibility needs to be incorporated throughout the development process while a product or service is being designed, not just at the end. Although products are built for the “targeted consumer”, you need to ensure that nobody is left behind, and that includes people with disabilities. For instance, website design is not always optimized for people with vision impairment, even though at least 1 billion people are visually impaired, according to the World Health Organization.

With the help of assistive technology, visually impaired people can use the internet, but some web design flaws still prevent access. Common problems for visually impaired users include areas that cannot be reached with a screen reader, images without alternative text, and links or buttons without an accessible description.
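Two of the problems listed above, images without alternative text and links or buttons without an accessible description, can be caught with a simple automated check. The following is a minimal sketch using only Python's standard `html.parser`; the class name `AccessibilityAudit` and the function `audit` are hypothetical names chosen for illustration, and the check is far from a full accessibility audit (for example, it ignores `aria-labelledby` and nested links).

```python
from html.parser import HTMLParser


class AccessibilityAudit(HTMLParser):
    """Collects a few common screen-reader problems from an HTML snippet."""

    def __init__(self):
        super().__init__()
        self.issues = []
        self._open = None  # the link or button currently being read, if any

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img":
            # alt="" is acceptable for decorative images; a missing alt is not.
            if "alt" not in attrs:
                self.issues.append(f"img without alt text: {attrs.get('src', '?')}")
            elif self._open and attrs["alt"]:
                # An image with alt text gives its parent link/button a name.
                self._open["text"] += attrs["alt"]
        elif tag in ("a", "button"):
            label = attrs.get("aria-label") or ""
            self._open = {"tag": tag, "text": label}

    def handle_data(self, data):
        if self._open:
            self._open["text"] += data.strip()

    def handle_endtag(self, tag):
        if self._open and tag == self._open["tag"]:
            if not self._open["text"]:
                self.issues.append(f"<{tag}> without an accessible name")
            self._open = None


def audit(html):
    """Return a list of human-readable issues found in the HTML string."""
    parser = AccessibilityAudit()
    parser.feed(html)
    parser.close()
    return parser.issues


if __name__ == "__main__":
    page = '<img src="logo.png"><a href="/cart"></a><button>Buy</button>'
    for issue in audit(page):
        print(issue)
```

A check like this is best run early and often during development, in line with the point above that accessibility should not be left to the end. It complements, rather than replaces, testing with real assistive technology.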

Learn more about accessibility here:

- How to plan for web accessibility

- The business factor of web accessibility

- Cognitive accessibility in web design

- Design considerations for accessibility

Need of privacy

Privacy issues are always near the top of the list when it comes to digital design. With Alexa listening to our conversations, Google monitoring our clicks, and Facebook having access to our private messages, privacy concerns keep growing over time. The best answer to these ethical concerns is to create designs that collect only the personal information that serves the consumer’s interest. For instance, Signal is a secure messenger application designed specifically to protect the privacy of its users. When you sign up, it asks only for your phone number, because that is all it needs to get you started. In this way, companies can design products that prioritize users’ privacy and build essential customer trust and loyalty.
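The idea of collecting only what is needed can be enforced in code with an explicit allow-list. The sketch below is an illustration only and does not describe how Signal or any other product is implemented; the names `REQUIRED_SIGNUP_FIELDS` and `minimize` are hypothetical, and the assumption that a phone number is the only required field is taken from the example above.

```python
# Assumption for this sketch: the phone number is the only field the service needs.
REQUIRED_SIGNUP_FIELDS = frozenset({"phone_number"})


def minimize(payload, allowed=REQUIRED_SIGNUP_FIELDS):
    """Keep only allow-listed fields and report the names of everything dropped."""
    kept = {key: value for key, value in payload.items() if key in allowed}
    dropped = sorted(set(payload) - set(allowed))
    return kept, dropped


if __name__ == "__main__":
    submitted = {
        "phone_number": "+15550100",
        "contacts": ["alice", "bob"],
        "location": "52.52,13.40",
    }
    kept, dropped = minimize(submitted)
    print("stored:", kept)
    print("discarded before storage:", dropped)
```

Using an allow-list rather than a block-list means that any new field a client starts sending is discarded by default until someone makes a deliberate decision to collect it.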

Need of transparency and clarity

One of the best practices for ethical design is to give users transparency so that they can make informed choices, which includes providing clear ways to withdraw from memberships easily. A common counterexample is the free trial that quietly converts into a paid plan. For instance, Amazon offers free shipping when you start a trial of Amazon Prime. Once the free trial ends, however, Amazon automatically charges you for the membership unless you cancel manually, and you may receive no notification or warning before the charge.
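A more transparent trial flow would warn users before the first charge. The following is a minimal sketch of that rule, assuming a 30-day trial with reminders 7 days and 1 day before it ends; the function names and default values are hypothetical and do not describe any real company's billing system.

```python
from datetime import date, timedelta


def renewal_notices(trial_start, trial_days=30, remind_days_before=(7, 1)):
    """Return (trial_end, reminder_dates) for a trial that converts to a paid plan."""
    trial_end = trial_start + timedelta(days=trial_days)
    reminders = sorted(
        trial_end - timedelta(days=days)
        for days in remind_days_before
        if days < trial_days  # skip reminders that would fall before the trial starts
    )
    return trial_end, reminders


def may_charge(today, trial_end, reminders_sent):
    """Allow the first charge only after the trial ended and a reminder went out."""
    return today >= trial_end and len(reminders_sent) > 0


if __name__ == "__main__":
    end, reminders = renewal_notices(date(2024, 1, 1))
    print("trial ends:", end)
    print("remind on:", reminders)
    print("charge without a reminder?", may_charge(end, end, reminders_sent=[]))
```

The design choice here is that billing is blocked by default: the charge is permitted only once at least one reminder has actually been sent, so a failure in the notification step cannot silently lead to a charge.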

Need of user participation

Since all designs are made for users, wouldn’t it be a good idea to bring users’ opinions into design decisions, requirements and ideas? Such involvement benefits the user, the designer and the design process alike. One effective way to involve users is to hold small user-testing sessions that reveal flaws, allowing you to revise the design and test it again. In this way, the user experience can be improved considerably.

Need to understand user preference

Designers should understand that whatever tool or service they create is only a small part of a user’s life, and that users sometimes need a break. In other words, products should be available when users need them and stay out of the way when they don’t. Not every product works this way. YouTube and Netflix have made it very easy for users to binge watch with their auto-play function. Facebook, too, has designed a platform that tends to exploit its users through a “social-validation feedback loop”: features such as likes and comments create excitement that keeps users reposting and checking for new notifications.

Learn more:

- User centered design approach: Principles and methods

- Building user trust in UX design

- How to do user research without any direct access to users

Need to focus on sustainability

Climate change is a global issue, so now is the right time for designers to consider the influence of their work on the world’s environment, climate and resources. One notable example of an ethical design trend that supports sustainability is circular design, which uses a closed-loop strategy where resources are continuously repurposed.

Instead of designing products and services with a linear life cycle that has a start, a middle and an end, the intent should be to design products that are continuously cycled through different forms in a loop of reuse and recycling, resulting in less waste. Several companies are adopting circular design, such as 57st. design, which makes modular furniture; AMP Robotics, which programs more efficient recycling robots; and PlasticRoad, which recycles plastic into modular road-building blocks.

Best practices for designing responsibly with ethics in focus

This section covers some of the best practices that help in designing responsibly, keeping ethical values and principles in mind.

Conducting proper research

Design research consists of a broad analysis of how your tech might be weaponized for abuse, as well as specific insight into the experiences of survivors and perpetrators of that kind of abuse. At this stage, the team investigates problems of interpersonal harm and abuse and explores any other safety or inclusivity concerns that may apply to your product or service, such as data security, racist algorithms, and harassment.

- Extensive research

It is very beneficial to start with broad, general research into similar products and into the safety and ethical concerns that have already been reported about them. For instance, a team designing a smart home device would do well to understand the many ways in which existing smart home devices have been used as tools of abuse. If your product involves AI, you will need to understand the potential for racism and other problems that have been reported in existing AI products. Almost every kind of technology has some potential or actual harm that has been reported in the news or studied by academics. Google Scholar is a useful tool for finding these studies.

- Having a word with survivors

It is preferable to first interview advocates who work in the space you are researching, so that you gain a clearer understanding of the abuse and are better prepared for a considerate conversation with a survivor. When interviewing survivors, make sure they are paid for their knowledge and shared experiences; asking them to share their experiences for free can be exploitative. Some survivors might not want to be paid, but you should still make the offer in the very first conversation. An alternative to direct payment is donating to an organization that actively works against the kind of violence the interviewee experienced.

- Having a word with abusers

It can be very helpful to speak with an abuser and learn how they weaponize technology against someone, how they cover their tracks, and how they rationalize or explain the abuse. This will make you more careful about the safety and security of your design and better prepared to build products and services with fewer threats and risks.

Building Archetypes

After finishing your research, use your insights to create abuser and survivor archetypes. Archetypes are not personas, because they are not based on real people whom you surveyed and interviewed. Rather, they are based on your research into likely safety concerns. This is similar to designing for accessibility, where we do not necessarily need a group of visually impaired users in our interview sessions to build a design that is inclusive of them; instead, we base those designs on existing research into what this group requires. In short, personas represent real users and include many details, whereas archetypes are broader and more generalized.

Now, let’s discuss the abuser and survivor archetypes. The abuser archetype is someone who looks at a product as a tool for causing harm. Such abusers might try to harm someone they don’t even know through surveillance or anonymous harassment, or they might try to monitor, control, abuse, or torment someone they know personally. The survivor archetype is a person who is being abused with the product. There are numerous situations to consider with regard to the archetype’s understanding of the abuse and how to stop it. Do the survivors need proof of abuse that they already suspect is happening, or are they unaware that they have been targeted and need to be alerted?

You might want to create several survivor archetypes to capture a wide range of experiences. Survivors might know that the abuse is taking place but be unable to stop it, for example when an abuser locks them out of IoT devices. Or they might know it is happening without knowing how, for example when a stalker keeps figuring out their location. Include as many scenarios as you need in your survivor archetypes. You will use them later when designing solutions that help your survivor archetypes accomplish their goals of stopping and ending abuse.

Brainstorming issues

Once you finish creating archetypes, brainstorm novel abuse cases and safety concerns. “Novel” here means things that weren’t found in your research: you are trying to recognize entirely new safety concerns that are distinctive to your product or service. The goal of this step is to exhaust every effort to recognize the harm your product could cause, so that the next step can focus on how to prevent that harm. Sit down with your team for a few hours and ask: apart from what we have already recognized in our research, how else could our product be used for any type of abuse?

Even after completing the above two steps, you might not be satisfied that you have identified every possible abuse of your product, and it's completely fine to feel that way. What matters is that you gave it your best and keep prioritizing design safety in the future. After your product is released, it's normal for users to identify problems you missed; take the feedback graciously and resolve the issues as soon as possible.

Designing best solutions

By now you should have a list of ways in which your product can be used for harm, along with abuser and survivor archetypes describing opposing user goals. The next step is to find ways to design against the identified abuser’s goals and to support the survivor’s goals. Below are some questions you can ask yourself to help prevent harm and support your archetypes.

- Can you design your product so that the identified harm cannot occur in the first place? If not, what roadblocks can you put up to stop the harm from happening?

- How can you make the victim aware that abuse is taking place through your product?

- How can you help the victim understand what they should do to make the problem stop?

- Can you identify any kinds of user activity that would indicate some form of harm or abuse? Could your product help the user access support?

Finally, safety testing

The last step is to test your prototypes from the perspective of your archetypes: the person who wishes to weaponize the product for harm and the victim of the harm who needs to regain control over the technology. As with any other type of product testing, you aim to rigorously test your safety solutions so that you can find gaps and correct them, validate that your designs will keep your users safe, and feel more confident when launching your product to the world.

Usually, safety testing takes place alongside usability testing. You can conduct safety testing on either your final prototype or the actual product if it has already been launched. Safety testing involves testing from the perspective of both an abuser and a survivor, although it might not always make sense to do both. And if you created multiple survivor archetypes to capture multiple scenarios, you will have to test from the perspective of each one.

- Testing an abuser

Testing from the perspective of an abuser helps you realize how easy it is for somebody to weaponize your product for harm. Unlike with usability testing, you want to make it difficult for the abuser to accomplish their goal. Take the goals from the abuser archetype you built earlier and try to use your product to attain them.

- Testing a survivor

Testing from the perspective of a survivor involves recognizing how to provide information and support to the survivor. It is not always needed as a separate step: thwarting an abuser’s attempt to stalk somebody also satisfies the survivor archetype’s goal of not being stalked, so separate testing from the survivor’s perspective won’t be required in that case.

- Stress testing

Stress testing helps make your product more compassionate and inclusive. The concept is taken from Design for Real Life by Eric Meyer and Sara Wachter-Boettcher. The authors point out that personas generally centre on people who are having a good day, whereas real users are often stressed out, anxious, having a bad day, or even experiencing suffering. These situations are known as “stress cases,” and testing your product against stress-case scenarios can help you find places where the design lacks compassion.

A study by the World Economic Forum

A white paper published by the World Economic Forum on the responsible use of technology suggests a new framework. It provides practical steps that companies can adopt to take more responsibility for ethical thinking and to apply it at each stage of the technology product life cycle.

Recognizing ethical and human rights influence

The first step towards solving any issue is to become aware of it and acknowledge that it exists and needs to be addressed. Industries engaged in disruptive technologies are often unaware that they have a major negative influence on the world. Companies therefore need to understand their ethical and human rights impacts and, if they are unable to identify such impacts themselves, hire employees or consultants with the expertise to advise them. A human rights policy can also form the basis for raising human rights awareness among staff, alongside training from ethics and human rights experts. YouTube’s ban on live-streamed broadcasts by children “unless they are clearly accompanied by an adult” is a good example of an effort to prevent potential negative ethical or human rights implications. The Google-owned company has started instituting new protection mechanisms, including AI classifiers that can find and remove content.

Stakeholder involvement

The responsible design and development of technology needs to include the active participation of stakeholders and rights holders. Organizations should identify the relevant populations, particularly vulnerable and marginalized groups that might be affected by the technology, and engage them in the early phases of the technology life cycle. This allows organizations to integrate what they learn and adjust the design of the product to reduce risks and increase opportunities for positive influence. Frameworks such as “human rights by design”, “responsible innovation”, “human‑centered design”, and “value sensitive design” offer roadmaps for identifying potential stakeholders and rights holders throughout the design process, considering their choices and interests, and engaging them appropriately to understand which of their rights and values might be affected by use of the technology.

Accountability and clarity

Apart from direct engagement, various technical approaches have emerged in response to concerns about discrimination, bias and a lack of clear decision‑making procedures in AI. Using diverse and representative data sets can help reduce bias and discrimination in algorithms, and tools like Accenture’s fairness toolkit and IBM’s AI Fairness 360 help organizations work towards fair results and outcomes.
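To make the idea of measuring bias concrete, one widely used fairness metric is the disparate impact ratio, which compares the rate of favourable outcomes between two groups. The sketch below computes it in plain Python and deliberately does not use the API of any of the toolkits named above; the function names and the sample data are hypothetical and for illustration only.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, favorable) pairs, where favorable is a bool."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favorable[group] += int(outcome)
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact(records, unprivileged, privileged):
    """Ratio of favorable-outcome rates; 1.0 means both groups fare equally.

    Raises ZeroDivisionError if the privileged group has no favorable outcomes.
    """
    rates = selection_rates(records)
    return rates[unprivileged] / rates[privileged]


if __name__ == "__main__":
    # Made-up decisions: group "a" is approved 8 times out of 10, group "b" 4 out of 10.
    decisions = (
        [("a", True)] * 8 + [("a", False)] * 2
        + [("b", True)] * 4 + [("b", False)] * 6
    )
    ratio = disparate_impact(decisions, unprivileged="b", privileged="a")
    print(f"disparate impact: {ratio:.2f}")
```

A ratio well below 1.0 suggests that the unprivileged group receives favourable outcomes less often. A common rule of thumb treats values under 0.8 as a signal worth investigating, though no single number settles whether a system is fair.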

Case Studies

Here are some case studies to give you a better understanding of ethical design and safety.

In 2019, Twitter announced that it was looking for public feedback on a draft set of rules to govern how it would handle synthetic and manipulated media. The early drafts of the policy included possibilities such as placing a notice next to these tweets, warning users before they liked or shared them, and adding links to additional contextual information. The first adopted version of the policy outlined a rubric for handling tweets containing manipulated media based mainly on three criteria: whether they included synthetic or manipulated media, whether they were shared “in a deceptive manner”, and whether the content was likely to affect public safety or “cause serious harm”. Beyond labelling such tweets or adding context to them, Twitter confirmed that tweets meeting certain combinations of these criteria were likely to have their visibility reduced or to be removed from the platform entirely.

Twitter’s efforts on synthetic and manipulated media aligned well with the three major areas of Safety by Design. First, by circulating its draft rule, actively seeking public input through an online form (made available in English, Hindi, Japanese, Portuguese, Spanish and Arabic), and publishing some of the survey results when announcing the new rule, Twitter offered the necessary transparency about its decision-making process. Likewise, by adding context and labels to manipulated media instead of removing it, Twitter helped preserve a space for relevant expression and commentary while giving users important autonomy in how they interacted with tweets. Lastly, by flagging manipulated media directly in the platform’s user interface, Twitter actively took responsibility for identifying manipulated media on its platform and pointing users to (or away from) it.

Zoom

According to Zoom’s April announcement, the company introduced a number of significant measures to address security and privacy concerns. Zoom announced a freeze on new feature development and shifted all engineering resources to security and privacy. It conducted an extensive review with third-party experts and set up a CISO council. It also provided users with training sessions, tutorials, and free interactive webinars, and took steps to reduce support wait times, so that users could be empowered to use the settings provided within the product to run safer meetings. The features include:

- Limiting attendance to participants who are signed in to the meeting using the email address listed in the meeting invite.

- A waiting room function.

- Password protection for meeting access.

- Locking meetings once they start.

- Muting participants who are not presenting.

- Removing unwanted participants.

- Disabling private chat.

Zoom is preparing a transparency report detailing information about requests for data, records and content. Its chief executive officer has hosted weekly webinars to answer questions from the community.

Final thoughts

So, are you ready to take responsibility for your designs? I hope this article has helped you take design ethics more seriously and inspired you to earn the trust of your prospective consumers. And trust me, by adopting an ethical technology mindset, you can foresee and respond firmly to the ethical challenges that arise over time.
