Cloud-native has no single agreed definition, but when combined with a continuous delivery (CD) paradigm it has revolutionized the modern application development model. Organizations, small or large, are adopting cloud-native architecture for their development environments in order to build and operate software more efficiently.

The rapid switch to the cloud-native world has pushed organizations to build, test, and release code as quickly as possible. Continuous delivery has come as an aid and has increased how frequently a software product can be released. However, nothing comes easy, and embracing continuous delivery for cloud-native has brought its own challenges. In this article, we discuss those challenges, their respective solutions, and considerations for applying continuous delivery to cloud-native. Let's start with the challenges.

The associated challenges

- Increased release process complexity: Before cloud-native, managing the release process of a single monolithic application was comparatively simple. In contrast, the process becomes more complex after a full move to cloud-native with continuous delivery. Engineers now have to manage the releases of dozens of microservices, and every layer added to the stack brings further dependencies to consider.

- Maintaining speed is essential: Speed is the major factor associated with continuous delivery. At the same time, keeping a complex system stable while pursuing a cloud-native strategy is just as imperative.

- Expansion of developers' responsibilities: Automation is applied to keep up with the speed of development. It solves the speed problem but raises a new concern for developers: it expands the role of the developers who contribute to the automation.

To keep the running application closer to the developers, cloud-native applications put configuration into code. This, in turn, increases the developers' responsibility for deploying and running their applications.

Solutions to follow

- Continual experimentation: To cope with the complexity, experimentation in cloud-native environments is imperative. Organizations can use canary releases, feature toggles, blue-green deployments, A/B tests, or a combination of these to test and measure the quality of the continuous delivery pipeline. In turn, this streamlines the continuous delivery process and lets teams evaluate features quickly to improve the customer experience.

- Managing speed through automation: Automation is the basis for successful orchestration, and it ultimately leads to a well-functioning continuous delivery pipeline. To deal with the increased speed, automation should therefore be a major priority.

- Short automation cycle: The growth in developers' roles and responsibilities is related to the 'no-ops' approach, an IT environment concept in which operations become so automated and abstracted that no in-house team is required to manage and measure them. To keep things running smoothly, blending the siloed functions into one short automated lifecycle helps ease the burden on developers.
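As a rough illustration of the experimentation techniques listed above, the following minimal Python sketch combines a feature toggle with a canary-style percentage rollout. The function names, the `"new-checkout"` feature name, and the hashing scheme are assumptions made for this example; they are not taken from any particular feature-flag product.

```python
import hashlib


def rollout_bucket(user_id: str, feature: str) -> int:
    """Map a user to a stable bucket in the range 0..99 for one feature.

    Hashing the feature name together with the user id means a user always
    lands in the same bucket for a feature, while different features
    spread users differently.
    """
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(user_id: str, feature: str, rollout_percent: int) -> bool:
    """Return True if the feature is switched on for this user.

    rollout_percent=0 turns the feature off for everyone and
    rollout_percent=100 turns it on for everyone; values in between expose
    the feature to roughly that share of users (the canary group).
    """
    return rollout_bucket(user_id, feature) < rollout_percent


if __name__ == "__main__":
    # Hypothetical feature name and user ids, for illustration only.
    users = [f"user-{n}" for n in range(1000)]
    canary = [u for u in users if is_enabled(u, "new-checkout", 10)]
    print(f"{len(canary)} of {len(users)} users see the canary release")
```

Because the bucket for a user never changes, raising the percentage only adds users to the canary group and never removes anyone who already had the feature, which keeps the experience consistent while a release is widened.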

Use Case: Efficient continuous delivery pipeline on cloud-native

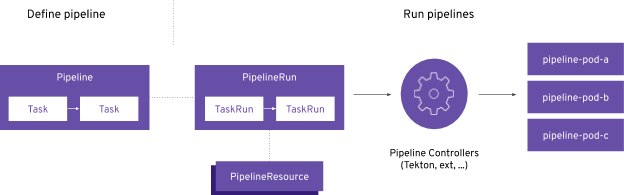

OpenShift Pipelines, which is based on the Tekton project, can be installed on a cluster via an operator from the OperatorHub in Red Hat OpenShift 4.1. It enables the creation of Kubernetes-style delivery pipelines. OpenShift Pipelines allows developers to own and control the complete microservice lifecycle without depending on a central team to maintain and manage the continuous integration (CI) server, its plugins, and its configuration.

Besides Kubernetes-style pipelines, OpenShift Pipelines supports creating and running pipelines serverlessly, deploying to multiple platforms (Kubernetes, VMs, and serverless platforms), building images with Kubernetes tools (Buildah and Dockerfiles, Jib, Kaniko), and interacting with pipelines through a developer command-line tool, the OpenShift developer console, and IDE plugins.

Kubernetes custom resource definitions (CRDs) are the building blocks for creating continuous integration/continuous delivery pipelines. The points below give a brief idea of each:

- Task: Series of commands or steps that run in separate containers in a pod.

- Pipeline: Collection of tasks executed in a defined order.

- PipelineResource: Inputs (git repo) and outputs (image registry) for the pipeline.

- TaskRun: Runtime depiction of task execution.

- PipelineRun: Runtime representation of a pipeline execution.
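To make the relationship between these resource types more concrete, here is a small Python sketch. It is a toy in-memory model written only for illustration: it is not the Tekton or OpenShift Pipelines API, it does not run containers, and every class and function name in it is an assumption of this example. It shows that a Task is a series of steps, a Pipeline is an ordered collection of tasks, and the "run" objects record what happened when they were executed.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Task:
    """A named series of steps (here plain Python callables, not containers)."""
    name: str
    steps: List[Callable[[], str]]


@dataclass
class Pipeline:
    """A collection of tasks executed in a defined order."""
    name: str
    tasks: List[Task]


@dataclass
class TaskRun:
    """Runtime record of one task execution."""
    task_name: str
    outputs: List[str] = field(default_factory=list)
    succeeded: bool = False


@dataclass
class PipelineRun:
    """Runtime record of one pipeline execution."""
    pipeline_name: str
    task_runs: List[TaskRun] = field(default_factory=list)

    @property
    def succeeded(self) -> bool:
        # A run succeeds only if it executed at least one task and none failed.
        return bool(self.task_runs) and all(tr.succeeded for tr in self.task_runs)


def run_task(task: Task) -> TaskRun:
    """Execute the steps of a task in order and record their outputs."""
    run = TaskRun(task_name=task.name)
    try:
        for step in task.steps:
            run.outputs.append(step())
        run.succeeded = True
    except Exception as exc:  # a failing step fails the whole task
        run.outputs.append(f"failed: {exc}")
    return run


def run_pipeline(pipeline: Pipeline) -> PipelineRun:
    """Execute tasks in their defined order, stopping at the first failure."""
    pipeline_run = PipelineRun(pipeline_name=pipeline.name)
    for task in pipeline.tasks:
        task_run = run_task(task)
        pipeline_run.task_runs.append(task_run)
        if not task_run.succeeded:
            break
    return pipeline_run
```

In this model, a hypothetical two-task pipeline such as `Pipeline("build-and-deploy", [build_task, deploy_task])` would produce one PipelineRun containing one TaskRun per executed task. In a real cluster, the corresponding objects are declared as Kubernetes resources rather than Python classes.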

Final Note:

Organizations are committed to delivering the best to their clients and customers, and in pursuit of that they are shifting towards cloud-native at a rapid pace. Combined with continuous delivery, cloud-native has reshaped the application development process. Increased speed, complexity, and responsibilities are the challenges that came alongside. To address them, a decentralized approach with shared processes and practices should be followed. Tools like OpenShift Pipelines have emerged as an aid to the continuous delivery process.

What's your take on this? Share your views on our social channels: Facebook, LinkedIn, and Twitter.
